At WWDC on June 10, Apple took the wraps off its ambitious project to inject generative AI features throughout its operating systems. Apple calls this Apple Intelligence, and it’s going to transform the way you use your iPhone, iPad, and Mac—but it’s also got significant limitations and caveats.

Here’s everything you need to know about Apple Intelligence before it lands on your devices: What it is, what it does, how it works, when it’s coming, and what you’ll need to be able to use it.

What is Apple Intelligence?

Apple Intelligence is Apple’s branded term for its suite of generative AI features coming first in iOS 18, iPadOS 18, and macOS 15 Sequoia. Because Apple has been using machine learning and “artificial intelligence” in its products for years (though not generative AI), and because Apple is a company that can never pass up a good branding opportunity, it took “AI” and gave it a snazzy new name.

Apple Intelligence involves several features, including some centered around reading and writing, some for image generation, and a few others. The aim is to help you get things done faster through voice and text prompts that draw on personal context, with results delivered privately.

When will Apple Intelligence be released?

Apple Intelligence will debut in beta when iOS 18, iPadOS 18, and macOS 15 Sequoia are released later in 2024 (probably September). It was not included in the initial Developer Beta released at WWDC, but Apple says it will be available later in the summer as part of the beta program.

The first release will be only in the English language and likely only in some regions, and Apple has said that not all features will be available in the initial public launch. Additional features and languages will roll out over the coming year.

What are the features of Apple Intelligence?

Apple Intelligence, in this first year’s release, is a broad set of features that can be loosely categorized into four groups: Siri, writing, images, and summaries/organization.

Siri

Siri will be vastly improved with Apple Intelligence. It will be more natural and easier to talk to using normal speech, even if you mess up your words. It will use context about you from throughout your iPhone–photos, messages, contacts, locations, and more–to give results that are specific to you, personally. It’s such a big upgrade that Apple calls it, “The start of a new era for Siri.”

Siri will remember context from one command to the next, so you won’t need to summon Siri a second or third time to do more than one task. It can also perform lots of new actions within apps. Apple is adding a lot to its “App Intents,” which is how apps–including third-party apps–integrate with Siri. Siri will also be able to look at the screen and understand what’s on it, so you can give it commands related to what you’re looking at. If your friend messages you his address, you can say “Siri, save this address to his contact info” and it will see the address on the screen to know what you mean, and know who “his” is from the context of the message.

Writing

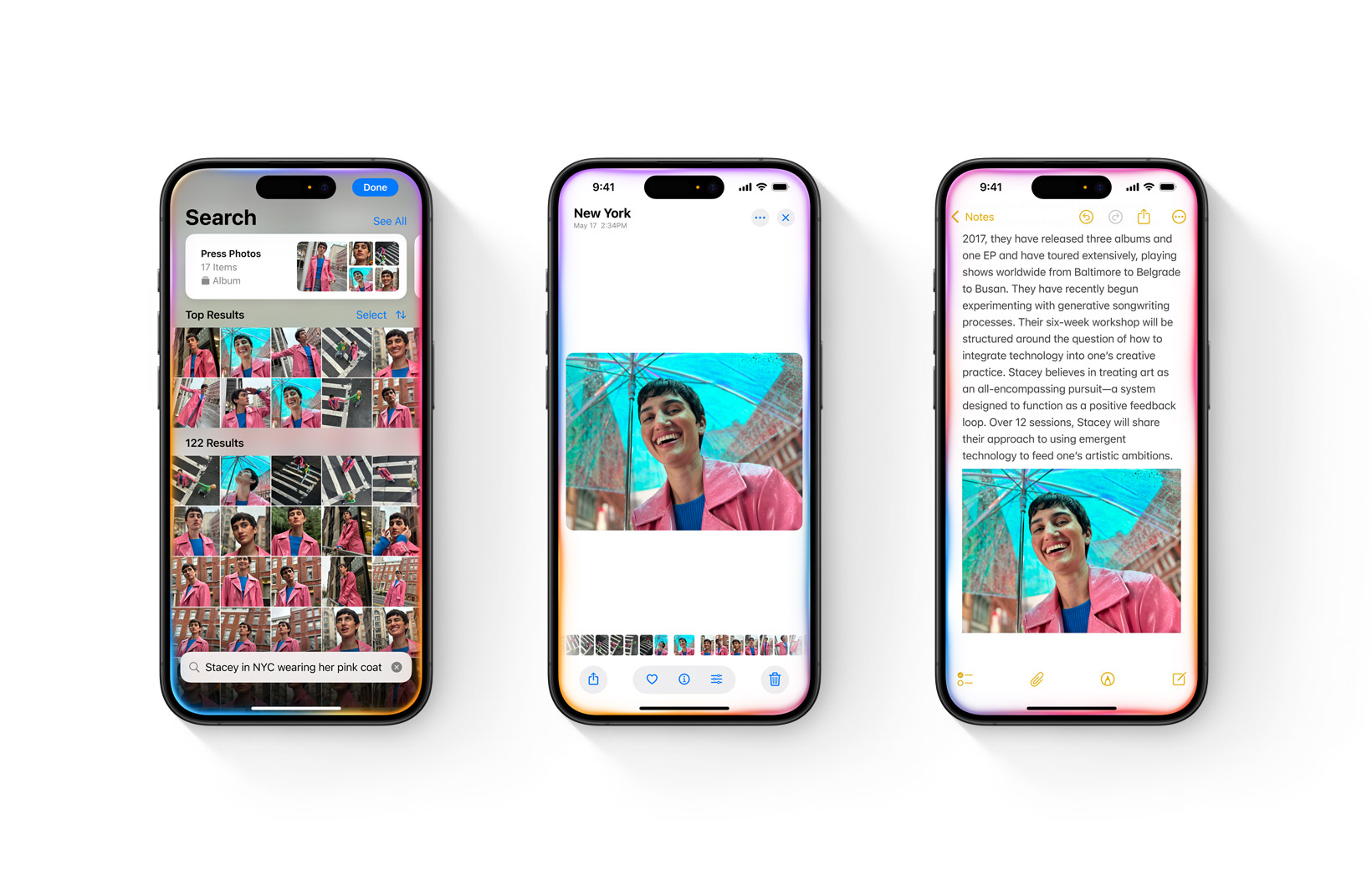

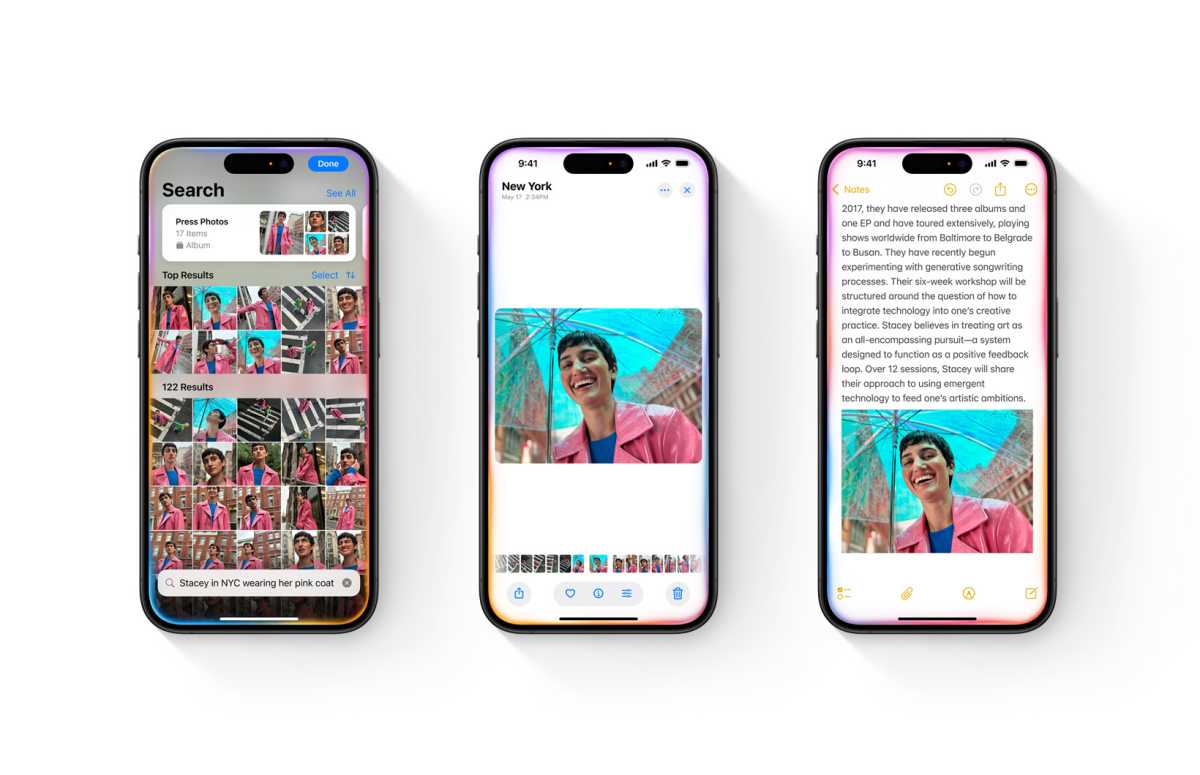

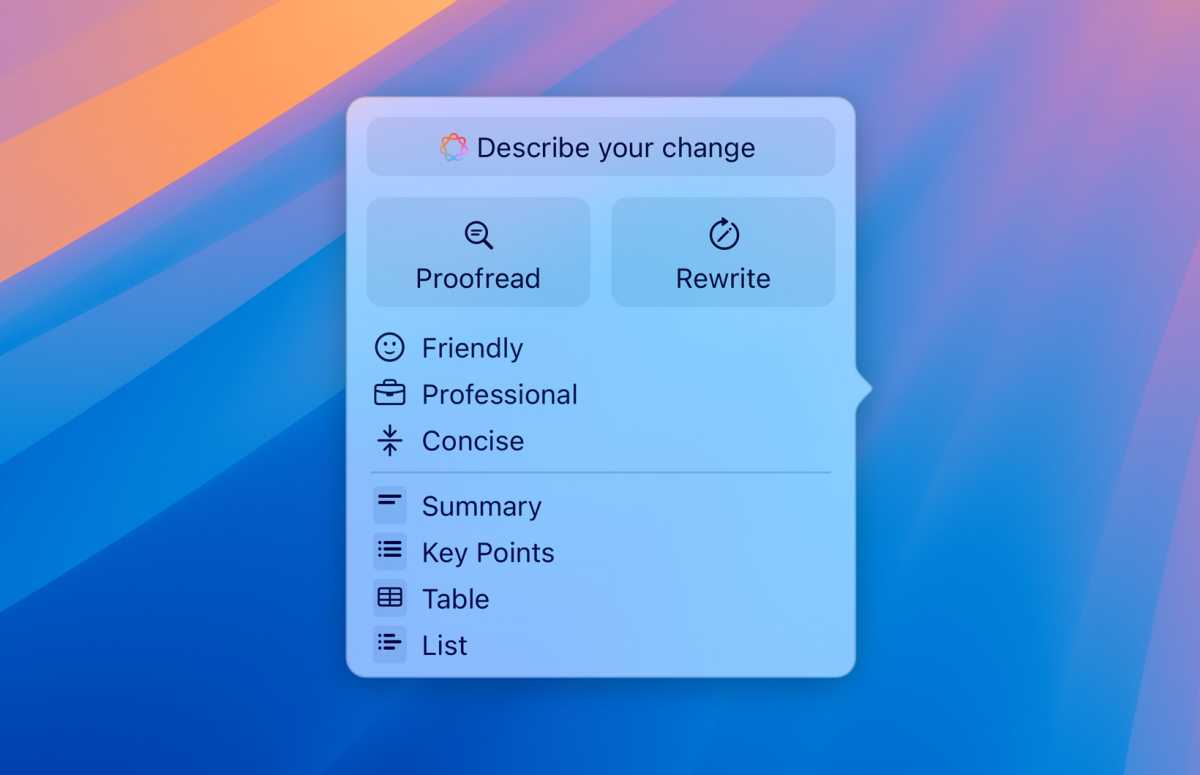

Almost anywhere in the system in which you write (Messages, Mail, Notes, web forums, you name it) you’ll be able to quickly call up new AI-powered writing tools to make it easier to say what you want how you want to. The tools can take selected text and change its style (friendly, professional, concise), create summaries or lists, or just proofread it for spelling and grammar.

If you want to generate new text, smart replies can take a few contextual bits of information provided by you and craft an appropriate response. Say someone sends you an email inviting you to their holiday cookout. You can provide simple info like whether you’re going or not, when you’ll be there, or offering to bring something, and the system will create a whole reply email for you.

Images

Apple is including image generation tools in Apple Intelligence. It can create new images in three styles: sketch, illustration, and animation. The lack of realistic photographic depictions seems like a safety choice. You can type a description to get an image or start with a rough sketch of what you want. You can even take an image from your photos library as a starting point or create a “Genmoji” out of people in your photos or contacts.

There’s a new dedicated app called Image Playground where you can experiment with all these image-generation tools, but they’re also available throughout the system. For example, you can circle a sketch or even an empty space in Notes using the new Image Wand feature, and Apple Intelligence will generate a contextually appropriate image there. You can also make an image of someone you know, relevant to your conversation, right in an iMessage thread. Apple is creating APIs for third-party developers to use these tools in their apps, too.

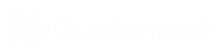

Apple Intelligence’s enhanced image understanding shows up in other ways, too. You can get very specific when searching your photos, with prompts like “Show photos of Charlotte from last summer when she was wearing sunglasses.” Finally, a new Clean Up tool in the Photos app lets you instantly remove unwanted objects from the background of your photos.

Summaries and organization

Apple Intelligence has a much greater understanding of language, so it can do a better job of understanding and presenting all the text you deal with.

Your Mail inbox, for example, can show summaries of emails instead of just the first few lines, so it’s easier to find the one you’re looking for. Apple Intelligence understands the content of your emails and will automatically place them into categories (Primary, Transactions, Updates, and Promotions), and build “digests” of emails from the same sender. Important emails can be discovered and bumped up to a list at the top.

Safari’s reader mode can summarize web pages. Apple Intelligence can also find priority notifications and show them in a brief list, with summaries, at the top of your notification stack. You can even engage a Focus mode setting that checks notifications as they come in and silences most of them, but lets through the ones that seem like they could be important.

A great example of how AI will permeate the system can be found in the Phone app, where you’ll be able to record any call (both parties will be notified) and save a transcript and summary of it, which can also help the AI find it later or know what you talked about to deliver more personal results.

How does Apple Intelligence differ from other AI?

Apple Intelligence has many qualities similar to other generative AI, but Apple stresses several qualities that make it stand out.

First is Apple’s focus on privacy. The features described here run mostly on-device, using Apple’s own silicon, so in most cases your data never leaves your device. Apple is not “scooping up” your information to sell or to train its AI models.

When something needs to be done that requires a bigger and more complex model than can be run on-device, Apple employs a new private cloud architecture called Private Cloud Compute that uses its own hardware. Only the very specific data necessary to complete your request is sent in a secure fashion, and after your request is completed the data is discarded. Apple has promised to make the server code accessible to outside security researchers who can audit it to make sure Apple is keeping its privacy promise.

Second, Apple Intelligence is personal rather than general. Because it runs mostly on the device and the cloud implementation is very private and fleeting, it can build a knowledge graph about you using all sorts of information on your iPhone–locations, photos, messages, mail, contacts, and much more. This enables it to give answers and produce results that are specific to your life and not just generalized.

And finally, it’s deeply integrated throughout the system, available in most of Apple’s apps but with tools and APIs to allow developers to use the tools within their own apps.

Apple acknowledges that popular AI chatbots have a massive base of general knowledge and more information about things like current events. So it’s not locking them out. On the contrary, it’s inviting them in.

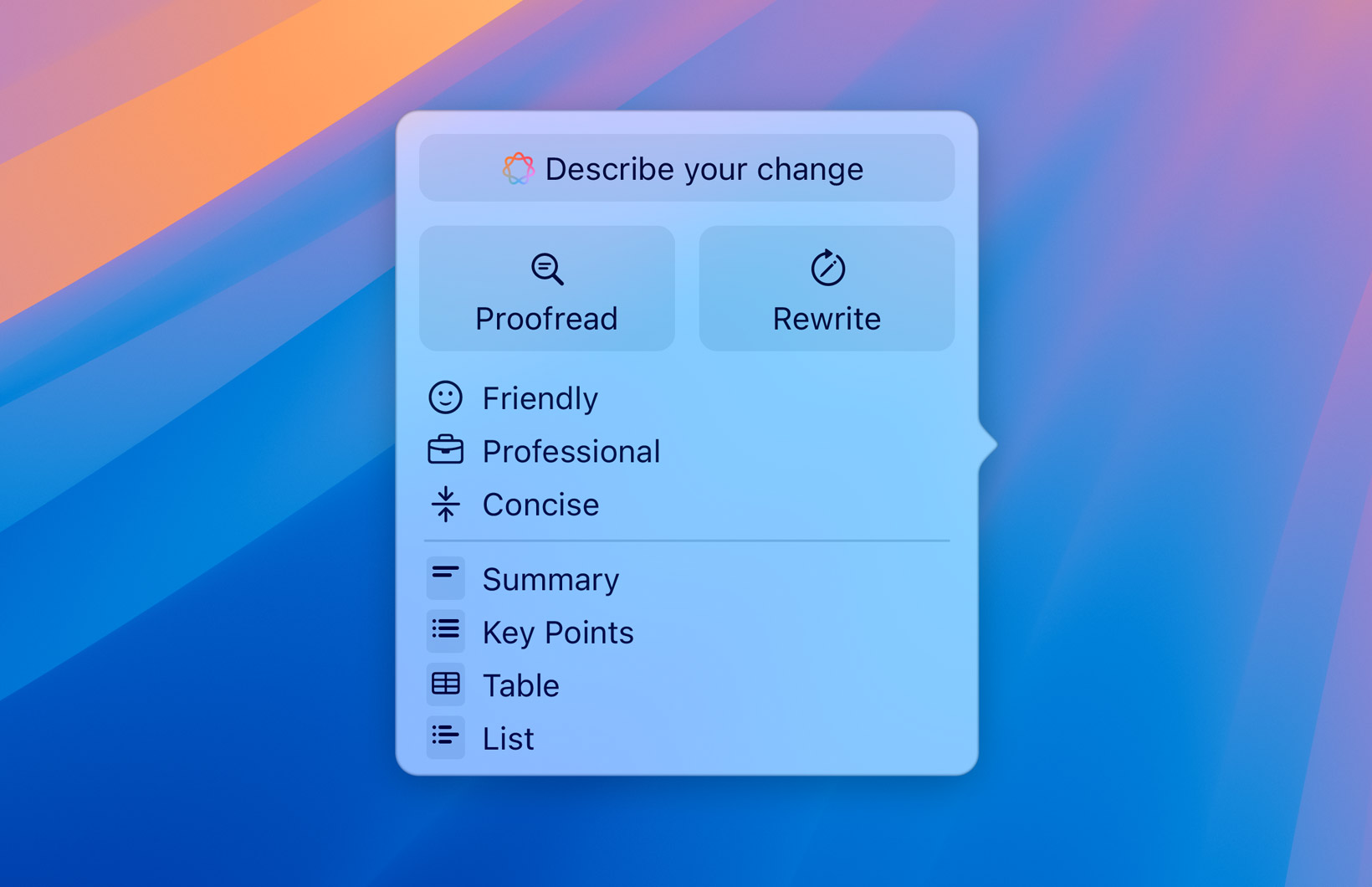

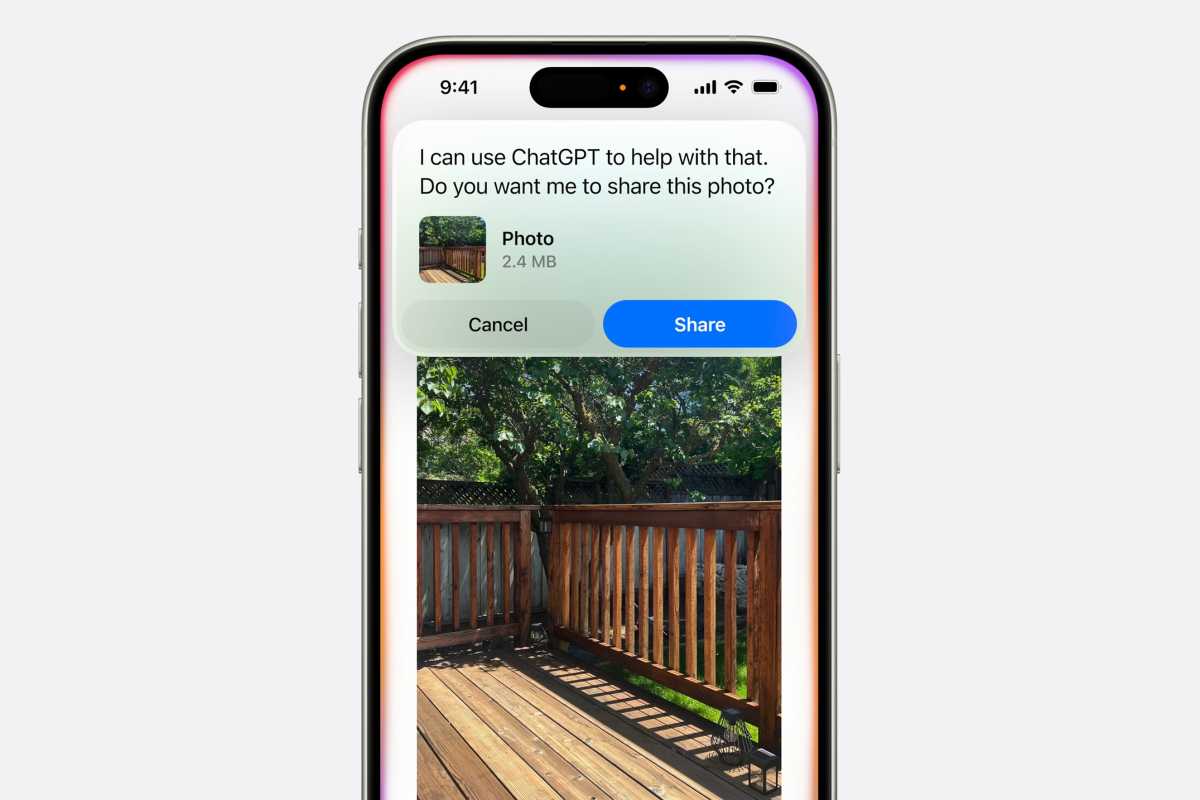

Siri will work seamlessly with ChatGPT, powered by OpenAI’s GPT-4o model, to answer complex questions or those that require broad general knowledge rather than personal info about you.

Just ask Siri anything. If it needs to hand the query off to ChatGPT, it will first ask you if that’s OK (and, if you’re providing an image, whether it’s OK to send it). If you allow it, you’ll get an immediate ChatGPT response with no need to install an app, log in to anything, or register a ChatGPT account. However, if you do have a ChatGPT subscription, you’ll have access to the more advanced features you pay for.
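
For readers who think in code, the flow Apple has described (on-device first, Private Cloud Compute for heavier jobs, ChatGPT only with your permission) can be pictured roughly like this. It is purely an illustrative sketch: Apple has not published its routing logic, and every name here (`Route`, `route_request`, the two boolean flags, the `ask_user` callback) is hypothetical. What happens when you decline the ChatGPT prompt is also an assumption.

```python
from enum import Enum
from typing import Callable


class Route(Enum):
    ON_DEVICE = "on-device model"
    PRIVATE_CLOUD = "Private Cloud Compute"
    CHATGPT = "ChatGPT"
    NOT_SENT = "not sent (user declined)"  # assumption: nothing leaves the device


def route_request(
    needs_broad_knowledge: bool,
    fits_on_device: bool,
    ask_user: Callable[[str], bool],
) -> Route:
    """Hypothetical model of where a request is handled, per the article."""
    if needs_broad_knowledge:
        # Per the article, Siri always asks before handing anything to ChatGPT.
        if ask_user("Send this request to ChatGPT?"):
            return Route.CHATGPT
        return Route.NOT_SENT
    if fits_on_device:
        # Most features are described as running locally.
        return Route.ON_DEVICE
    # Bigger, more complex models run on Apple's own servers.
    return Route.PRIVATE_CLOUD


# Example: a general-knowledge question where the user taps "no".
print(route_request(True, True, lambda prompt: False).value)
```

The point of the sketch is the ordering: permission is checked before anything is handed to a third party, and Apple's own cloud is a fallback rather than the default.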

While ChatGPT is the first AI integration, Apple promises to allow others in the future, such as Google Gemini; Google was rumored to be in talks with Apple ahead of WWDC. It wouldn’t be surprising to see other AI applications join Apple Intelligence quickly.

Once again, we should note that Apple will always ask before sharing any data with ChatGPT and that the company’s arrangement with OpenAI stipulates that IP addresses will be obscured and no data will be saved.

What devices do I need to use Apple Intelligence?

Unfortunately, all this powerful local generative AI has a steep hardware cost. If you have an iPhone, you’ll need an A17 Pro (or newer) processor, which means only the iPhone 15 Pro and iPhone 15 Pro Max. Presumably, all iPhone 16 models released this fall will be compatible with Apple Intelligence as they are rumored to use Apple’s A18 processor.

For Macs and iPads, you’ll need an M1 or newer processor. That means no Intel Macs, no matter how powerful, and no iPads that run A-series processors. Nearly all Macs from the last few years will qualify, but on the iPad side only iPad Pro models from 2021 or later and the two most recent iPad Air models, which have M1 and M2 processors, make the cut.
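
If you want to check a drawer full of devices against these rules, they boil down to a simple test on the chip name. The sketch below is an illustration only, not an Apple API: the function name, the device labels, and the chip-name format are all assumptions, and it treats any A-series chip numbered above 17 as "newer" than the A17 Pro.

```python
import re


def supports_apple_intelligence(device_type: str, chip: str) -> bool:
    """Apply the article's rule: iPhone needs an A17 Pro or newer,
    iPad and Mac need an M1 or newer. Names and formats are hypothetical."""
    chip = chip.strip().upper()
    a_series = re.fullmatch(r"A(\d+)( (PRO|BIONIC))?", chip)
    m_series = re.fullmatch(r"M(\d+)( (PRO|MAX|ULTRA))?", chip)

    if device_type == "iphone":
        if a_series is None:
            return False
        generation = int(a_series.group(1))
        # Assumption: anything numbered above 17 counts as newer than A17 Pro.
        return generation > 17 or (generation == 17 and a_series.group(3) == "PRO")

    if device_type in ("ipad", "mac"):
        # Any M-series chip qualifies; Intel and A-series chips do not.
        return m_series is not None and int(m_series.group(1)) >= 1

    return False


for device, chip in [("iphone", "A16 Bionic"), ("iphone", "A17 Pro"),
                     ("ipad", "A14 Bionic"), ("mac", "M1")]:
    print(device, chip, supports_apple_intelligence(device, chip))
```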

The new iOS 18, iPadOS 18, and macOS 15 Sequoia updates have plenty of other features that will be supported by a lot of other hardware, but when it comes to Apple Intelligence…well let’s just say there will be a lot of upgrades this fall.

Apple Intelligence is not coming to other operating systems just yet, either. You won’t find its features in tvOS 18 or visionOS 2, and while HomePod’s screenless state makes most of these features moot anyway, it’s not yet clear if the superior Siri experience will come to HomePod or not.